Google just introduced Gemma 4, the newest evolution of its lightweight model family. If you’re considering it for your stack, use this practical checklist to validate fit, plan deployment, control costs, and ship safely.

Source: Google’s announcement of Gemma 4 (official blog).

When a small model like Gemma 4 makes sense

- Low-latency or on-device experiences where speed and privacy matter.

- Cost-sensitive workloads with predictable prompts and output lengths.

- Domain-heavy tasks boosted by retrieval (RAG) or light fine-tuning.

- Data residency or safety constraints that limit use of very large models.

Quality signals to check before you commit

- Task-relevant evals: coding (HumanEval-like), reasoning (MMLU-style), retrieval (MTEB, which scores embedding models rather than generators), multimodal if applicable. Demand numbers on your use case, not just leaderboards.

- Context window and function/tool use: ensure both match your orchestration pattern and latency targets.

- Hallucination and safety: red-team prompts for your domain; measure refusal, jailbreak resistance, and unsafe content rates.

- Operational track record: model cards, licenses, and update cadence.

For independent benchmarking perspective, see MLCommons MLPerf Inference and evaluation practices like Stanford CRFM’s HELM.
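One way to get numbers on your own use case is a small exact-match harness over a gold dataset. The sketch below is a minimal illustration, assuming the model is wrapped in a plain Python callable. `GoldExample`, `evaluate`, and the `fake_model` stand-in are hypothetical names, not part of any Gemma tooling.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class GoldExample:
    prompt: str
    expected: str


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not scored as an error."""
    return " ".join(text.lower().split())


def evaluate(model: Callable[[str], str], examples: Iterable[GoldExample]) -> float:
    """Return exact-match accuracy of `model` over `examples` (0.0 if there are none)."""
    examples = list(examples)
    if not examples:
        return 0.0
    hits = sum(normalize(model(ex.prompt)) == normalize(ex.expected) for ex in examples)
    return hits / len(examples)


# Hypothetical stand-in for a real model call (managed API, vLLM, Ollama, ...).
def fake_model(prompt: str) -> str:
    return {"Capital of France?": " Paris "}.get(prompt, "unknown")


gold = [
    GoldExample("Capital of France?", "paris"),
    GoldExample("Capital of Peru?", "Lima"),
]
accuracy = evaluate(fake_model, gold)
print(f"exact-match accuracy: {accuracy:.2f}")
```

Exact match suits short, closed-form answers. Open-ended tasks usually need a rubric or human review instead.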

Deployment paths

- Managed APIs: fastest to ship; trade some control for reliability and tooling.

- Self-hosted inference: use vLLM for high-throughput serving with PagedAttention and efficient batching.

- Local developer workflows: Ollama for quick iteration, prototyping, and edge trials.

- On-device: quantized variants for mobile/embedded; verify memory footprint and thermal constraints.
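Both vLLM and Ollama can expose an OpenAI-compatible chat endpoint, so one request shape can cover the self-hosted and local paths above. The sketch below only builds the request and sends nothing. The model name is a placeholder, the ports are the tools' usual local defaults, and `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

# Usual local defaults; adjust to your own deployment.
VLLM_BASE_URL = "http://localhost:8000/v1"
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# "my-gemma-model" is a placeholder; use the model id your server actually reports.
req = build_chat_request(VLLM_BASE_URL, "my-gemma-model", "Summarize this ticket.")
print(req.full_url)

# To send it, a server must be running at base_url:
# with urllib.request.urlopen(req, timeout=30) as resp:
#     reply = json.load(resp)["choices"][0]["message"]["content"]
```

Keeping the client identical across backends makes it easier to compare a managed endpoint, vLLM, and Ollama on the same prompts.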

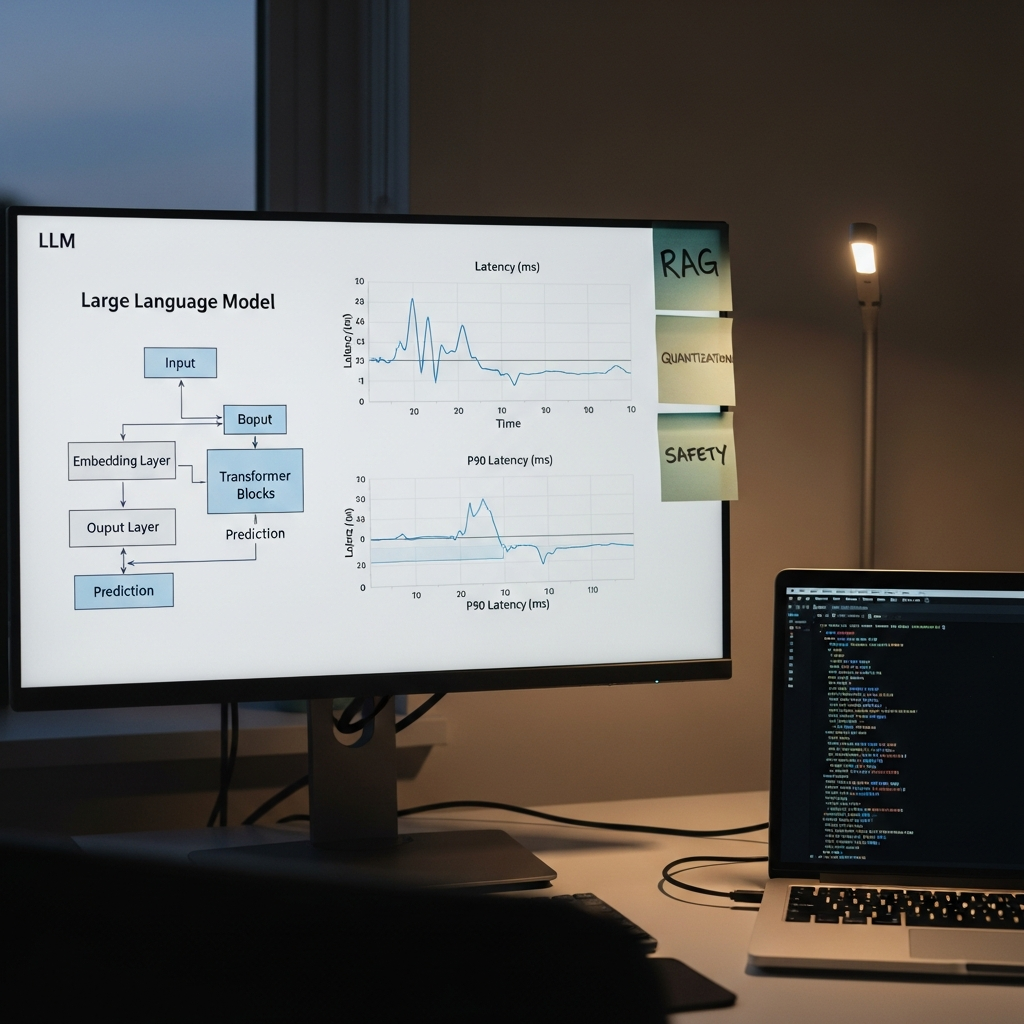

Optimization playbook (to hit latency and cost goals)

- Quantization: INT8/INT4 weights shrink memory and usually speed up inference. Quality loss is often small at INT8 and more task-dependent at INT4, so re-run your evals after quantizing.

- Speculative decoding: pair a small draft model with the target model to cut per-token latency.

- KV cache reuse and batching: maximize throughput under traffic bursts.

- Prompt engineering: shorter, structured prompts; system instructions over few-shot when possible.

- Light fine-tuning (LoRA/QLoRA): align on tone and domain without full retraining.
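To see why quantization matters for fit, a rough weight-memory estimate is parameters × bits per weight ÷ 8. The sketch below applies that arithmetic to a hypothetical 4-billion-parameter model. That size is chosen for illustration and is not a published Gemma 4 figure.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GiB.

    Ignores KV cache, activations, and per-group quantization scales,
    so real usage will be higher.
    """
    return num_params * bits_per_weight / 8 / 2**30


# Hypothetical parameter count, for illustration only.
PARAMS = 4e9
for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{label}: {weight_memory_gib(PARAMS, bits):.2f} GiB")
```

Under these assumptions the weights drop from roughly 7.45 GiB at FP16 to about 1.86 GiB at INT4, which is often the difference between fitting on a device and not.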

Safety and governance must-haves

- Input/output filtering and PII handling with robust logging.

- Red teaming and eval suites specific to your use case and jurisdictions.

- Human-in-the-loop for high-risk actions; clear escalation paths.

- Map controls to a framework like the NIST AI Risk Management Framework.
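As a starting point for input/output filtering, the sketch below redacts email addresses and phone-like numbers before text is logged or sent to a model. The two patterns are deliberately simple assumptions and will miss many PII formats. A production filter would need a dedicated detection service and locale-aware rules.

```python
import re

# Illustrative patterns only; they do not cover all real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> tuple[str, int]:
    """Replace emails and phone-like numbers with placeholders.

    Returns the cleaned text and the number of redactions, which can be
    logged as a metric without logging the PII itself.
    """
    text, n_email = EMAIL_RE.subn("[EMAIL]", text)
    text, n_phone = PHONE_RE.subn("[PHONE]", text)
    return text, n_email + n_phone


clean, hits = redact_pii("Contact jane.doe@example.com or +1 (555) 010-9999.")
print(clean, hits)
```

Running the same function on model outputs gives a symmetric check on both sides of the call.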

Cost math that actually matters

Monthly cost = (price per 1M tokens ÷ 1,000,000) × tokens per user per month × monthly active users + infra (GPU/CPU, memory) + engineering + evals. Lower cost by shrinking prompts, capping output length, batching requests, and caching retrieved context. Streaming improves perceived latency but does not reduce token spend.
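The cost formula can be checked with a small calculator. A price quoted per 1M tokens has to be divided by 1,000,000 before it is multiplied by token counts. Every number below is a made-up placeholder, not real Gemma pricing, and `monthly_cost` is a hypothetical helper.

```python
def monthly_cost(price_per_1m_tokens: float, tokens_per_user: float,
                 monthly_active_users: int, fixed_costs: float = 0.0) -> float:
    """Token spend plus fixed monthly costs (infra, engineering, evals)."""
    token_cost = price_per_1m_tokens * tokens_per_user * monthly_active_users / 1_000_000
    return token_cost + fixed_costs


# Placeholder inputs: $0.50 per 1M tokens, 200k tokens per user per month,
# 10k monthly active users, $3,000 of fixed costs.
baseline = monthly_cost(0.50, 200_000, 10_000, fixed_costs=3_000)
# The same workload with prompts trimmed by 25%.
trimmed = monthly_cost(0.50, 150_000, 10_000, fixed_costs=3_000)
print(f"baseline: ${baseline:,.2f}  trimmed: ${trimmed:,.2f}")
```

With these placeholder inputs the token portion is $1,000 of a $4,000 total, which shows how fixed costs can dominate at small scale.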

7-day quick-start plan

- Day 1: Define success metrics and gold datasets for your top 2–3 tasks.

- Day 2: Prototype with a managed endpoint; baseline latency, quality, and spend.

- Day 3: Try self-hosting (vLLM) with quantization; compare throughput.

- Day 4: Add retrieval; compare zero-shot vs. RAG for accuracy and context cost.

- Day 5: Run safety tests; add filters and rate limits.

- Day 6: Optimize prompts and enable batching/streaming; re-measure.

- Day 7: Ship an internal pilot; collect human feedback and iterate.
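For the latency baselines on Days 2 and 6, a small timing helper keeps measurements comparable between runs. This is a sketch that assumes the model call is wrapped in a zero-argument callable. `measure_latency` is a hypothetical name, and the `time.sleep` call stands in for a real request.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[], object], runs: int = 20) -> dict[str, float]:
    """Time `call` repeatedly and report p50, p95, and max latency in milliseconds."""
    if runs < 2:
        raise ValueError("need at least 2 runs to compute percentiles")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    # method="inclusive" keeps the estimate within the observed range.
    cuts = statistics.quantiles(samples, n=20, method="inclusive")
    return {
        "p50_ms": statistics.median(samples),
        "p95_ms": cuts[18],
        "max_ms": max(samples),
    }


# Stand-in for a real model request.
stats = measure_latency(lambda: time.sleep(0.001), runs=10)
print({name: round(value, 2) for name, value in stats.items()})
```

Tail latency (p95) usually matters more than the median for user-facing features, so record both before and after each optimization.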

Sources

- Google: Introducing Gemma 4

- MLCommons: MLPerf Inference benchmarks

- Stanford CRFM: HELM evaluation framework

- vLLM project: High-throughput LLM serving

Takeaway

Small models shine when latency, cost, and control are non-negotiable. Validate with your data and constraints first—then optimize aggressively for speed, safety, and spend.
